Please complete the details below then remove this line:

Domino/Notes Version: 14.5

Add-on Product (if appropriate, e.g. Verse / Traveler / Nomad / Domino REST API):

Its Version:

Operating System: Windows, Linux - it doesn’t matter

Client (Notes, Nomad Web, Nomad Mobile, Android/iOS, browser version): Notes, Browser

Problem/Query:

Business partner deployed Domino v14.5 and also Domino IQ. They successfully did installation & setup regarding Domino IQ and also they can do test the email summarizing feature with Domino IQ.

But they found a couple of problem / strange situation.

- high usage of CPU resource doing test of email summarizing feature

- slow response of email summarizing feature

The deployment environment H/W spec is so good and GPU capacity is also high. So we don’t think this is related H/W spec.

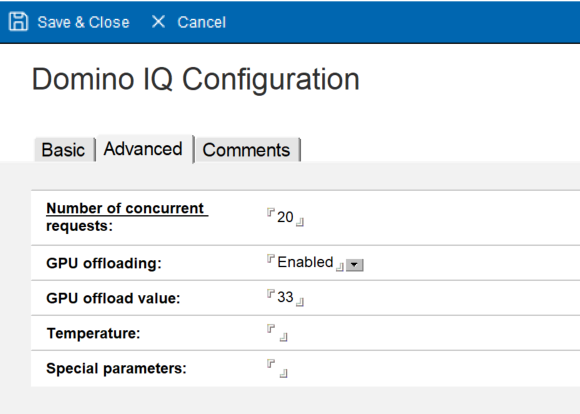

They found the this document and GPU offloading.

https://help.hcl-software.com/domino/14.5.0/admin/conf_add_dom_iq_config_document.html

They tried to change the value of GPU offload from 33 to 80. They got the fast response and saw the low usage of CPU.

What is the GPU offloading?

Is there any other document about performance tuning of GPU offloading?

tl;dr GPU offloading means GPU is preferred for LLM requests and the CPU can be used for other things, important if Domino (and potentially other) traditional IT products are running on the same host. The GPU offload value determines the number of layers loaded into the GPU’s memory, so the right answer will depend on what it can cope with and what’s needed.

Here’s the answer about GPU offloading I got when I asked AI:

GPU offloading is the process of delegating computationally intensive tasks from a central processing unit (CPU) to a graphics processing unit (GPU). The GPU’s massively parallel architecture, with thousands of specialized cores, makes it highly efficient at handling calculations that can be broken down into many smaller, simultaneous operations.

How GPU offloading works

The process involves a handoff of tasks and data from the host CPU to the GPU. For this to work, the application must be designed to identify parallelizable code sections that can benefit from the GPU’s architecture. The typical offloading workflow is as follows:

-

Identify workload: The application identifies compute-intensive parts of its code that are suitable for parallel processing, such as matrix multiplications or rendering calculations.

-

Data transfer: The necessary data is copied from the CPU’s main system memory (RAM) to the GPU’s dedicated memory (VRAM).

-

Kernel execution: The GPU is instructed to execute a “kernel,” which is a program containing the parallelized code. The GPU launches many instances of this kernel simultaneously on its different cores.

-

Computation: The GPU performs the calculations much faster than a CPU could for this type of workload.

-

Result transfer: Once the computation is complete, the results are copied back from the GPU’s VRAM to the CPU’s RAM for the rest of the application to use.

Benefits of GPU offloading

-

Improved performance: The primary benefit is a significant speedup for applications that rely on parallel computations, such as scientific simulations, data analytics, and machine learning.

-

Reduced CPU load: By moving heavy workloads to the GPU, the CPU is freed up to handle other tasks, which improves overall system responsiveness.

-

Efficient resource utilization: Offloading allows a computer to make the best use of all its available hardware resources, leveraging the parallel strength of the GPU and the serial strength of the CPU.

-

Memory management: Some advanced offloading techniques, particularly for large language models (LLMs), can dynamically move parts of a model between GPU and system memory to run models larger than the GPU’s VRAM would normally allow.

Common use cases

-

Artificial intelligence: Training and inference for machine learning and deep learning models is a common application, as these workloads involve massive, parallel matrix operations.

-

Large language models (LLMs): Offloading is used to manage the memory requirements of large models. Layers or parts of the model that don’t fit into VRAM are moved to system RAM, allowing larger models to run on consumer-grade hardware.

-

Scientific computing: Complex mathematical and physics simulations, like those in computational fluid dynamics, use GPUs to accelerate calculations.

-

Video games and rendering: The entire field of 3D graphics depends on offloading the rendering workload from the CPU to the GPU for real-time visual output.

-

Video processing: Tasks such as encoding, decoding, and special effects rendering are often offloaded to the GPU for faster processing.

When I asked what “GPU offload value” might mean, it gave this response:

In the context of running large language models (LLMs) locally, a “GPU offload value” represents the number of model layers that will be loaded into the GPU’s video memory (VRAM) for faster processing. The remaining layers are processed by the CPU, often much more slowly.

A large language model is composed of many layers of a neural network. A model that has, for example, 33 layers, can be configured with an integer value to determine how many of those layers run on the GPU.

1 Like